Learning with Diverse Communities Through Culturally Responsive Evaluation

This issue of GMNsight is co-produced by GEO (Grantmakers for Effective Organizations)

As Kathleen Enright rightly stated in the opening article of this issue: “Until all participants in the evaluation chain embrace and support a learning focus, I think our ability to use evaluation results to increase impact will be limited.” As grantmakers, we arguably play the most important role in ensuring that all stakeholders receive the support they need to be full participants, contributors, learners and beneficiaries of an evaluation process. For evaluation to be worth the money and time we spend on it, everyone needs to be a full participant — funders, grantee organizations and even the end beneficiaries, the people we all hope to help. But too often, evaluation discourages and disconnects people instead of engaging them. How can we make evaluation accessible to all?

In our case, through the Community Leadership Project, this has meant applying a culturally responsive lens to our work and how we learn from it. Since 2010, the James Irvine, William and Flora Hewlett, and David and Lucile Packard foundations have been collaborating on CLP, an initiative to build the capacity and sustainability of organizations serving low-income people and communities of color in California’s Central Valley, Central Coast and the Bay Area. These community organizations work on everything from counseling for African American men in rural communities to running classes on Mexican traditional dance to advocating for the occupational safety and rights of nail salon workers.

Since its launch, over 500 organizations have participated in CLP, with involvement ranging from attending trainings to in-depth, multi-year projects that include technical and general support. As a complex, multi-faceted program, CLP presented many opportunities and challenges for learning about what worked and how to improve. Along the way, the funders commissioned Social Policy Research Associates (SPR) to conduct an ongoing and integrated evaluation of the initiative. Recognizing that inclusive learning leads to better results, the evaluation approach included data collection at every level of the initiative, including funders, intermediaries and community grantees. This evaluation allowed stakeholders to learn in real time and adapt the initiative to the needs of these mostly small and grassroots organizations.

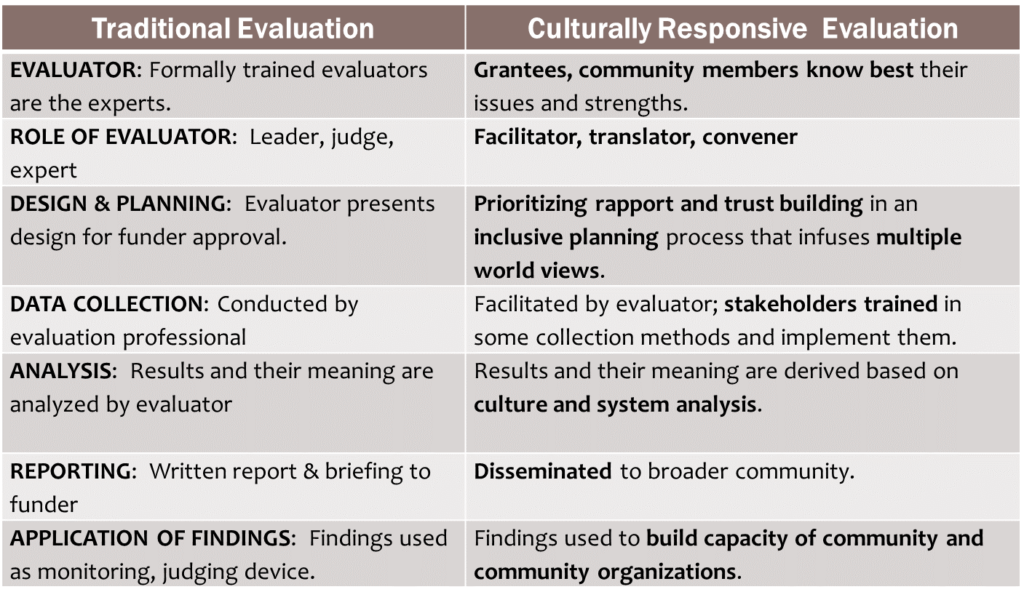

In an evaluation such as this one, it is critical to bring a culturally responsive lens to ensure that diverse communities have their voices included. This means shifting from the traditional model of evaluators as “outsiders” producing reports to evaluators as facilitators of learning and improvement. The differences between classical evaluation and culturally responsive evaluation are best summarized by the following table developed by Hanh Cao Yu of SPR for the Foundation Resource Guide on Commissioning Multicultural Evaluation:

With the initiative now in its fifth year, we’ve already drawn a number of lessons from the evaluation that we are using to evolve our work:

Lay the groundwork for successful evaluation

A successful evaluation takes full stakeholder participation, and in our case, lots of careful listening and input from our community grantees. Getting that kind of buy-in required being transparent through setting expectations and communicating upfront about participation in the evaluation process. Ideally, evaluation is embedded from the beginning of the project, not as an afterthought, so that key stakeholders can participate in shaping the evaluation. Solicit input from intermediaries, grantees and partners about the scope, purpose and level of data collection before finalizing the evaluation plan.

Fully support the evaluation and learning

We’ve heard from grantees that the amount of reporting and data funders expect from their grantees is increasing, without a comparable increase in resources. Based on feedback we received from the CLP community grantees, we built additional resources into grants to cover the extra time they would need to meaningfully engage in the learning and evaluation process. This funding was used for stipends to support time away from the office, travel, lodging, childcare and translation services when needed.

Meet your grantees where they are — geographically and organizationally

Community grantees in the Community Leadership Project are spread over a wide geographic area with extremely disparate contexts, from the agricultural Central Valley to technological Silicon Valley. To make the evaluation, and the whole initiative, meaningful, we had to develop a more nuanced understanding of the unique challenges and assets in the different regions. This required building empathy for our grantees and understanding their contexts by regularly going to where they live and work. This philosophy of building closer relationships takes more time, but the results are worth it. Another way we obtained more nuanced evaluation data was by not shying away from collecting rich qualitative data. For some grantees, this is one of the only ways they have the capacity to present what they’re learning. One effective activity was a series of regional Learning Labs that SPR organized, which gathered a number of grantees within an area to share stories and network.

Use common tools and a central platform for data sharing

In the first phase of the initiative, SPR and the funders had significant challenges in systematically collecting good grantee data. Grantees had different ways of collecting and reporting results, and with three funders, it was hard to track who had which pieces of information. As an evolution of the work, we started to use a cloud-based project management program for coordination and information sharing. While not perfect, this solution allowed for building community through more inclusive and transparent communications with grantees. It also helped ensure that everyone had the same data and reports and could access a common calendar for coordinating convenings, training activities and deadlines, and it provided a platform for community grantees to showcase their results and accomplishments.

Many of these lessons may seem obvious, and they are not unique to culturally responsive evaluation. However, they underscore the centrality of learning from grantees and sharing knowledge in ways that differ from, and are at times less traditional than, those often prioritized by funders. We continue to hear from grantees that funder requirements and a lack of empathy can be significant challenges to a true partnership. For us, the above lessons reinforce the key tenets of good practice in culturally responsive evaluation. These include valuing and seeking the input and expertise of those who are closest to the community and meeting grantees where they are to lay the groundwork for the evaluation. We found that slowing down to build trust and rapport lays a stronger foundation for doing long-term work together. Finally, as funders, we find that the results are more valuable and authentic when we provide the tools, platforms, and capacity building for community organizations to engage in data gathering, interpretation of findings and collective learning. This is the very essence of what it means, for us as funders, to embrace a learning focus to achieve deep and lasting community impact.

Content for this article was adapted from joint presentation materials developed in partnership with Hanh Cao Yu, Vice President of SPR, and Michael Courville, Founder and Principal of Open Mind Consulting, for GEO’s Learning Conference 2015 session on Learning with Diverse Communities through Culturally Responsive Evaluation. The Organizational Effectiveness program at the Packard Foundation helps organizations strengthen their fundamentals so they can focus on achieving their missions. The program funds current foundation grantees to help them build core strengths in areas like strategic and business planning, financial management, board and executive leadership, and communications. Further information on the Community Leadership Project can be found at: www.communityleadershipproject.org.