“This survey has gotten me to think about ways that I can improve our reporting templates. Rather than vaguely asking grantees to report on their progress toward their research aims, I think that I could improve our reporting templates by asking more directed questions…”

2017 Revisit Reporting Survey Comment

Like the funder above, many of us take grant reporting, well, for granted. The fact is: for grantmakers and grant recipients alike, the process can feel inevitable. Grantmakers make grants, recipients say thank you, and about a year later (sometimes much more frequently), the recipient says thanks again, and writes several pages of dry prose to tell what happened and account for where that money went. On the grantmaker’s side, a couple of folks read the reports, the relevant program officer might take some notes and ask a few questions, the grants management staff logs the submission into a database, and – assuming the money went where it was supposed to go – the grant is renewed or closed.

Ten years ago, Project Streamline’s first broad survey of application and reporting practices, Drowning in Paperwork, Distracted from Purpose, surfaced a few general facts about grant reporting:

- Most reports were submitted in hard copy.

- There was enormous variability in reporting requirements, including the number of reports required, report format, report requirements, and reporting timelines.

- Grantseekers complained that reporting, like application, was often not “right-sized” to the size, type, and risk profile of the grant.

- Grantseekers felt that frequent reporting schedules showed a lack of trust from their funders.

- Reports were used mainly for compliance purposes.

At that time, only 27 percent of funders indicated that they shared report information with others in the field, and only a few followed up regularly with grantees.

Over three weeks in September 2017, PEAK Grantmaking decided to Re-visit Reporting. Along with several colleague organizations, PEAK Grantmaking distributed and publicized an online survey to solicit grantmakers’ experiences with and aspirations for grant reporting. While technological advances have increased the use of online or emailed reporting over the last decade, 2017 survey respondents indicated similar variability in reporting requirements and, despite good intentions, similar limitations in how reports are used. Many survey respondents intend for reports to influence strategy, learning, future decision-making, effectiveness, and impact; yet, few grantmakers actually use reports for those purposes. Instead, many grantmakers default to basic compliance – a “checked box” to continue the funding or close the grant.

In January, PEAK Grantmaking shared the current state of grant reporting, based on more than 300 survey responses[1] from grantmaking organizations of all types and sizes. The 2017 survey found that most respondents use reports for accountability, documentation, and individual program officer learning. Reports are much less frequently used for highlighting grantee work publicly, whole organization learning, board learning, and building the field through publications. Survey respondents noted inconsistency, even within a single grantmaking organization, in how reports are used: “in practice [reports] inform or are part of renewal decisions or they are just a formality. Some mix of useful and useless,” commented one respondent.

Only 6 percent of survey respondents do not require reports, which means an awful lot of grantees are dedicating resources to fulfilling reporting requirements and many, many grantmakers are dedicating resources to designing, redesigning, reminding, and recording submitted reports. We described the reality of reporting in our previous article; this article focuses on what we learned from our survey’s open-ended questions designed to highlight aspirations for grant reporting:

- If you could make one change to your foundation’s reporting processes and requirements, what would it be?

- If you could make one change to how your foundation uses the information gleaned from reports, what would it be?

Beyond these questions, responses in each of the survey’s comment sections suggested barriers that may be keeping intentions from aligning with reality. Sharing aspirations and common challenges can be the first step toward reporting that works better for both grantmakers and grant recipients.

The Changes You’d Make

According to the 2017 Revisit Reporting survey, reporting is both strangely inevitable and frustratingly ineffective – or at least not as effective, strategic, and informative as survey respondents would like. Responses emphasized limited capacity (mostly time and attention) while suggesting structural, even cultural barriers to improved practice. Suggested changes in process and requirements fell into the following categories:

The largest share of responses (42) mentioned shorter, streamlined formats and requirements. One comment captured this sentiment saliently – and likely on behalf of both the grantmaker and the grant recipient: “Decrease the extent of the budgetary fiscal reporting… it’s nine worksheets feeding into a summary sheet and, in my opinion, way too detailed!”

Beyond shorter reports, survey respondents suggested increased flexibility, consistency, better timing, and better online reporting capacity. Only three respondents suggested eliminating reports altogether. It seems significant that the most frequently mentioned changes connect “process and requirements” to “how the reports are used.” These form-meets-function mentions include: align requirements with what’s useful (38); develop a taxonomy to aggregate (11); combine the report with renewal (4); and three exceptionally blunt suggestions to simply use the reports.

Seems straightforward, right? Well, perhaps not.

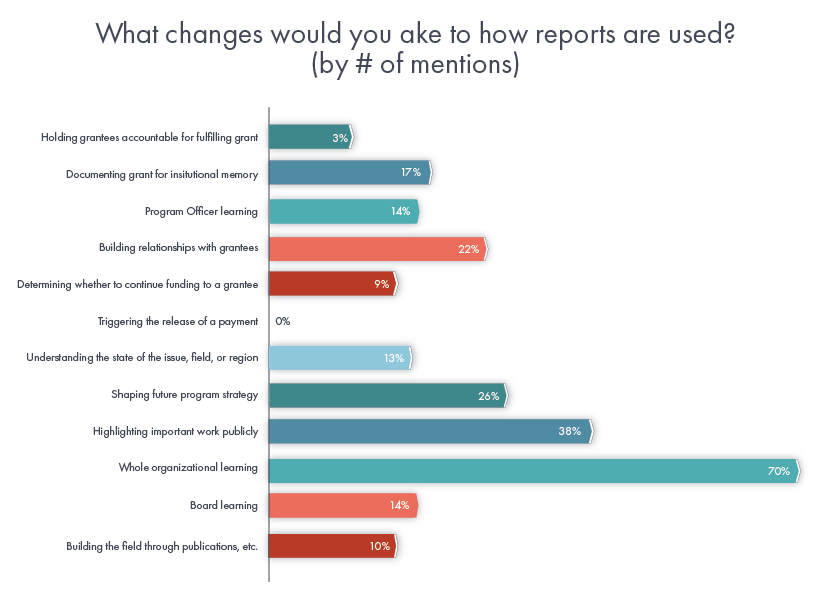

To get a handle on the hundreds of “changes in use” suggestions, we attributed each comment’s main idea to one or more of the “use categories” presented earlier in the survey. Additional use categories were added when a comment did not fall easily within those presented. As a reminder, on average, respondents’ current uses of reporting can be ordered, from most frequent to least frequent, this way (for more detail on the data presented in this chart, review our previous article on the survey data):

Survey respondents offered a variety of changes they believe would make better use of reports:

Several things are notable about the changes that survey respondents would most like to make, especially when considered in light of the previous chart showing actual use of reports. First, comments about the more tactical functions indicate that reporting is not always meeting even its most commonly stated purposes:

- documenting for institutional memory (17),

- board learning (14),

- building relationships with grantees (22),

- program officer learning (14), and

- determining whether to continue funding a grantee (9).

That these practices are not happening with regularity suggests missed opportunities. Again, time and attention appear to be the greatest barriers to using reports for these purposes. For example, one respondent admitted: “…I do think we program officers could do more with our reports that would be more helpful to us and to the grantees.” Another response aspired to use reporting for board learning, and in doing so, surfaced a barrier very frequently mentioned by respondents seeking both practical and far-reaching changes in the use of reports: “Figure out how to efficiently and with little or no pain, mix apples, oranges and maple syrup into something meaningful for staff and board.” The key finding here is that reports contain a lot of raw information, but this information is not easily translated into something illustrative or meaningful for stakeholders.

Second, most suggested changes signaled the desire for higher-level or strategic uses for reporting: learning, communications, strategy development, and relationship building. The aspiration that reports contribute to whole organization learning (70) was mentioned most frequently as a change that respondents would like to make. One response suggested: “Have a documented rationale for why we are collecting specific information, work collaboratively across teams to make better use of the information…”

Other similarly ambitious changes were voiced: to shape future program strategy (26) or understand the state of the issue, field, or region (13). These uses would align reporting with overall organizational strategy and mission. Others linked reports to external influence and the wish to use reports to highlight important work publicly (38) and build the field through publications or other public offerings (10); for example: “We are exploring how to work with our grantees to tell their stories in ways that help us advocate within philanthropy for what and how we fund.”

Beyond format changes, aspirations for reporting speak to a larger challenge: how to analyze or aggregate individual grant reports into salient, applicable actions across portfolios, strategies, and fields. According to the survey, evaluating a portfolio, strategy, organization, or field (when it happens) is often assigned to external consultants. Often, such efforts are pursued apart and away from individual grant reports. Many of the suggested changes would require reports (or report data) to be persuasively synthesized and communicated – in other words, learning “to mix apples, oranges and maple syrup into something meaningful.”

What types of tools, resources, or training would help?

Several ideas emerged consistently throughout responses to the question, “What types of tools, resources, or training could PEAK Grantmaking provide to help your organization use reporting most effectively?”

Not surprisingly, survey respondents are looking to PEAK Grantmaking for templates, sample reporting forms, and right-sized reporting that can reflect the wide range of size and type of grants being made. For example, respondents wondered how grantmakers adjust reporting guidelines for capital grants, operating support, or a one-time $5,000 grant vs. a multi-year $1M grant. In this Journal, PEAK Grantmaking will share profiles of grantmakers moving away from the one-size-fits-all, one-deadline-fits-all reporting that has been the status quo.

Let’s be clear, though: whole organization learning and field-building are no easy feats. Entire journals, manuals, affinity groups, and consulting practices focus on these goals. Even within one organization, whole organization learning would tap executive, programmatic, governance, administrative, and financial actors. Coordinating and communicating across these diverse individuals and functions would require more intentional reporting questions and formats, an audience eager to receive this learning, and the dedicated time for these conversations. Considered in today’s reporting context, this and other suggested changes would require grantmakers to learn and prioritize new ways of working and learning together, both internally and externally.

PEAK Grantmaking is already a source for stories about new ways of working and learning. For example, grantmakers like the Bush Foundation are using grant reports to develop practitioner “knowledge bases” that attract peers and policymakers. Projects like this require significant time and resources dedicated to relationships among grantmakers’ own grants management, communications, and program staff and, even more so, among their grant recipients.

Investing in changes like this would likely result in more effective and useful reporting practice – perhaps in even greater, more scalable impact. Yet the significant structural and cultural implications embedded in the changes proposed by survey respondents may be the greatest barrier to realizing them. To fully grapple with whole organization learning would require both know-how and want-to – bandwidth currently invested on the front end in both choosing grant recipients and planning strategies.

What next?

Among grantmakers throughout the field, reporting has undergone the significant sea change of moving online. Funders have made many tweaks, from minor to major, to their reporting processes, including less frequent and more streamlined requirements, more focused metrics, and better systems for managing the piles of reports that accumulate. Such changes are impressive and help to streamline grantmaking and improve outcomes (profiles of innovative and streamlined practice to come as part of this journal). But survey respondents were united in a deeper desire to be even better and to do more. A sense of frustration with colleagues’ apathy toward reporting and unease about current practices permeated the survey.

Happily, one last change suggested by survey respondents may be the best salve: 22 respondents articulated their desire to see reporting used to build relationships with grantees. Neither the most frequent nor the most grandiose of aspirations, this change is nevertheless entirely within the grasp of anyone who interacts with grant reports. To be sure, building relationships with grantees is time-intensive. Unless a renewal is in the works, using grant reports to check back in with grantees would run counter to the pre-grant bias embedded in most grantmaking structures and schedules. And, in this, we may find reporting’s most valuable quality: using grant reports to build relationships with grantees disrupts the grant pitch/approve-or-decline dynamic that so often thwarts healthy grantmaking relationships.

Talking about a report or, better still, having a conversation with grantees instead of a written report – which several survey respondents are doing! – allows grantmakers to focus on what has already been achieved together. Distinct from a grant request, grant reports, by definition, emphasize a common vision through reflection and celebration.

Respondents want to connect reporting to whole organization learning and a lot of other impact- and mission-driven behaviors. That’s great news – and starting with this journal, PEAK Grantmaking will be offering ways to identify and remove barriers to better reporting practices. But, in the meantime, starting right now: why not spare a few minutes to revisit reporting with one or a few of your grant recipients? Use a submitted grant report (or waive the written report altogether) to begin a different kind of conversation with grant recipients. In other words, don’t let larger, more complex aspirations keep you from investing in a relationship which is both within your reach and, after all, at the very heart of strategy and impact. Maybe together, you and your grant recipients will mix apples, oranges, and maple syrup into a meaningful reporting recipe we’ll all find tasty!

Previous articles and future articles will offer some good reasons to revisit reporting, while highlighting grantmakers making better use of reporting. This journal issue will conclude with five quick, buzz-worthy recommendations for improving reporting right now.

Join the discussion! Do these findings sound right to you? What can PEAK Grantmaking do to help?

[1] Survey Sample: The findings that follow are drawn from 304 completed surveys from representatives of grantmaking organizations across the country. Multiple submissions were received from 24 organizations, resulting in survey responses from a total of 280 unique organizations. About half of respondents described themselves as grants management professionals. Another 25 percent were program officers or senior program staff (Director, VP, or the like). The final 25 percent included CEO/EDs, directors of finance or operations, communications staff, and administrative assistants or equivalent. Eighty-four percent indicated responsibility for ensuring compliance; more than 70 percent play a role in designing reports. While 64 percent review grants for substantive insight and impact, 50 percent or fewer indicated responsibility for using reports for learning, stories, and data; for evaluating grant success; or for follow-up conversations with grantees. Grantmakers responding to the survey varied widely in size and type, but most were either private independent or family foundations. More than 60 percent distribute more than $5 million in grants annually. About 33 percent give more than $25 million in grants each year. Staff-wise, responses represented the variety in the field, ranging from single-staffed funders to funders with more than 76 staff. About half indicated fewer than 10 staff.